Hand Gesture Detection ML Application

Description

The Hand Gesture Detection application is an ML-based computer vision solution designed for real-time gesture recognition with hand detection, single-hand tracking, and hand key-point estimation. The application detects hands in every frame, tracks one hand across frames, identifies one key point (at the center) for each hand location, and classifies the hand pose into one of the supported gesture classes. This use case supports HD resolution.
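The single key point per hand can be illustrated with a short sketch. It assumes, purely for illustration, that each hand location is an axis-aligned box given as (x, y, width, height) in pixels. The application's actual output format is not specified here, and `box_center` is a hypothetical helper, not part of the SDK.

```python
from typing import Tuple


def box_center(x: float, y: float, width: float, height: float) -> Tuple[float, float]:
    """Return the center of an axis-aligned box (hypothetical box format)."""
    if width < 0 or height < 0:
        raise ValueError("width and height must be non-negative")
    # The center lies half a width and half a height from the top-left corner.
    return (x + width / 2.0, y + height / 2.0)


if __name__ == "__main__":
    # Example box: top-left corner at (100, 50), 40 pixels wide, 20 pixels tall.
    print(box_center(100, 50, 40, 20))
```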

Prerequisites

Choose one setup path:

Hardware Requirements

Sensor Adapter (included with the Astra Machina Micro kit)

OV5647 Camera Sensor

Building and Flashing the Example using VS Code

Use the VS Code flow described in the SR110 guide and the VS Code Extension guide:

Build (VS Code):

Open Build and Deploy -> Build Configurations.

Select hand_gesture_detection in the Application dropdown.

Build with Build (SDK + App) for the first build, or Build App for rebuilds.

Flash (VS Code):

Use Image Conversion to generate the flash image.

In Image Conversion, open Advanced Configurations and edit NVM_data.json.

Set the model flash offsets in NVM_data.json:

image_offset_Model_A_offset: 00607000

image_offset_Model_B_offset: 00737000

In Image Flashing (SWD/JTAG), flash the model binaries first:

hand_gesture_detection_flash(1280x704).bin at 0x607000

hand_gesture_detection_flash(320x320).bin at 0x737000

Flash the generated firmware image (B0_flash_full_image_GD25LE128_67Mhz_secured.bin).
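The two model offsets used in this flow can be sanity-checked with a small sketch. It parses the hex strings in the form they appear in NVM_data.json and reports how much room lies between the two model regions. The helper names are hypothetical, and the model binary sizes are not given in this document, so the size argument is a placeholder you would fill in from the actual files.

```python
MODEL_A_OFFSET = "00607000"  # offset used for the 1280x704 model binary
MODEL_B_OFFSET = "00737000"  # offset used for the 320x320 model binary


def parse_offset(text: str) -> int:
    """Parse a hex offset string such as '00607000' or '0x607000'."""
    return int(text, 16)


def fits_before_next(offset_a: str, size_a_bytes: int, offset_b: str) -> bool:
    """True if a binary of size_a_bytes placed at offset_a ends at or before offset_b."""
    return parse_offset(offset_a) + size_a_bytes <= parse_offset(offset_b)


# Space available for the first model before the second model region starts.
gap = parse_offset(MODEL_B_OFFSET) - parse_offset(MODEL_A_OFFSET)

if __name__ == "__main__":
    print(hex(gap), gap)
```

With the offsets above, the gap is 0x130000 bytes (1,245,184), so a first model binary larger than that would overlap the second region.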

Building and Flashing the Example using CLI

Use the CLI flow described in the SR110 guide:

Build (CLI):

From <sdk-root>/examples, build the example:

    cd <sdk-root>/examples
    export SRSDK_DIR=<sdk-root>
    make cm55_hand_gesture_detection_defconfig BOARD=SR110_RDK BUILD=SRSDK

Flash (CLI):

Activate the SDK venv (required for image generation tools):

    # Linux/macOS
    source <sdk-root>/.venv/bin/activate

    # Windows PowerShell
    .\.venv\Scripts\Activate.ps1

Generate the flash image:

    cd <sdk-root>/tools/srsdk_image_generator
    python srsdk_image_generator.py \
      -B0 \
      -flash_image \
      -sdk_secured \
      -spk "<sdk-root>/tools/srsdk_image_generator/B0_Input_examples/spk_rc4_1_0_secure_otpk.bin" \
      -apbl "<sdk-root>/tools/srsdk_image_generator/B0_Input_examples/sr100_b0_bootloader_ver_0x012F_ASIC.axf" \
      -m55_image "<sdk-root>/examples/out/sr110_cm55_fw/release/sr110_cm55_fw.elf" \
      -flash_type "GD25LE128" \
      -flash_freq "67"

Flash model binaries first:

    cd <sdk-root>
    # Quote the image paths: the parentheses in the file names are special characters in most shells.
    python tools/openocd/scripts/flash_xspi_tcl.py \
      --cfg_path tools/openocd/configs/sr110_m55.cfg \
      --image "examples/SR110_RDK/vision_examples/uc_hand_gesture_detection/models/hand_gesture_detection_flash(1280x704).bin" \
      --flash-offset 0x607000
    python tools/openocd/scripts/flash_xspi_tcl.py \
      --cfg_path tools/openocd/configs/sr110_m55.cfg \
      --image "examples/SR110_RDK/vision_examples/uc_hand_gesture_detection/models/hand_gesture_detection_flash(320x320).bin" \
      --flash-offset 0x737000

Flash the firmware image:

    cd <sdk-root>
    python tools/openocd/scripts/flash_xspi_tcl.py \
      --cfg_path tools/openocd/configs/sr110_m55.cfg \
      --image tools/srsdk_image_generator/Output/B0_Flash/B0_flash_full_image_GD25LE128_67Mhz_secured.bin \
      --erase-all
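The CLI flash sequence can also be driven from a script. The sketch below is a minimal, unofficial wrapper. It reuses only the script path, flags, offsets, and file names shown in this section, and keeps the same order (model binaries first, then the firmware image). The function names and the `dry_run` option are hypothetical. Passing the arguments as a list means the parentheses in the model file names need no shell quoting.

```python
import shlex
import subprocess
from typing import List

FLASH_SCRIPT = "tools/openocd/scripts/flash_xspi_tcl.py"
CFG_PATH = "tools/openocd/configs/sr110_m55.cfg"
MODEL_DIR = "examples/SR110_RDK/vision_examples/uc_hand_gesture_detection/models"
FIRMWARE = ("tools/srsdk_image_generator/Output/B0_Flash/"
            "B0_flash_full_image_GD25LE128_67Mhz_secured.bin")

# (file name, flash offset) pairs, in the order given in this guide.
MODELS = [
    ("hand_gesture_detection_flash(1280x704).bin", "0x607000"),
    ("hand_gesture_detection_flash(320x320).bin", "0x737000"),
]


def build_flash_commands(python_exe: str = "python") -> List[List[str]]:
    """Return the argv lists for the model flashes followed by the firmware flash."""
    commands = []
    for name, offset in MODELS:
        commands.append([python_exe, FLASH_SCRIPT, "--cfg_path", CFG_PATH,
                         "--image", f"{MODEL_DIR}/{name}", "--flash-offset", offset])
    commands.append([python_exe, FLASH_SCRIPT, "--cfg_path", CFG_PATH,
                     "--image", FIRMWARE, "--erase-all"])
    return commands


def run_all(sdk_root: str, dry_run: bool = True) -> None:
    """Print each command (dry run) or execute it from the SDK root."""
    for argv in build_flash_commands():
        if dry_run:
            print(" ".join(shlex.quote(arg) for arg in argv))
        else:
            # check=True stops the sequence if any flash step fails.
            subprocess.run(argv, cwd=sdk_root, check=True)


if __name__ == "__main__":
    run_all("<sdk-root>", dry_run=True)  # replace <sdk-root> with your SDK path
```

Running it with `dry_run=True` only prints the commands, which is a convenient way to review them before touching the board.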

Running the Application using VS Code Extension

Windows note: Ensure the USB drivers are installed for streaming. See the Zadig steps in SR110 Build and Flash with VS Code.

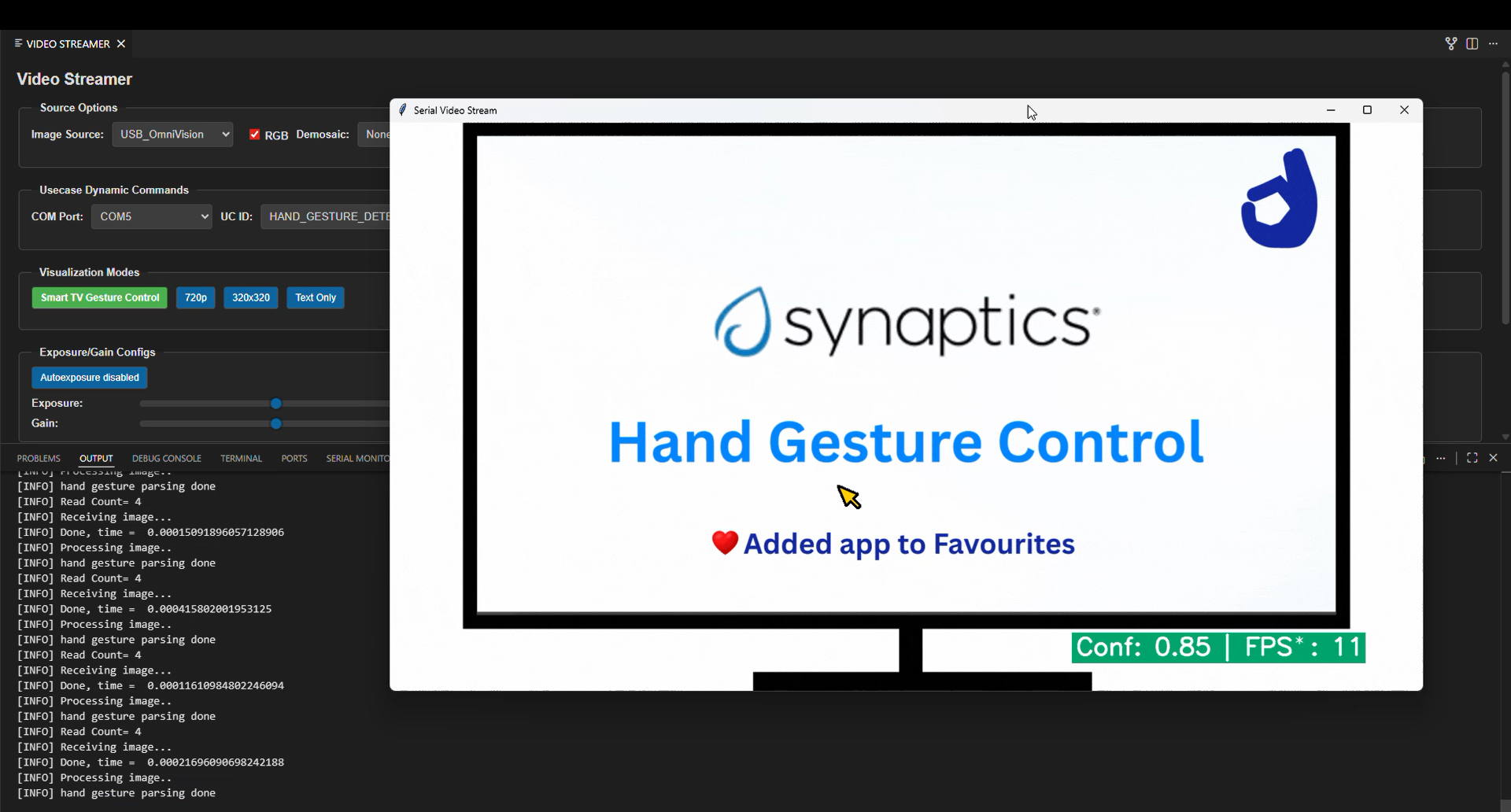

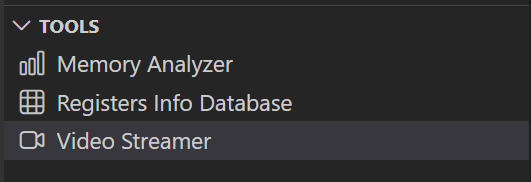

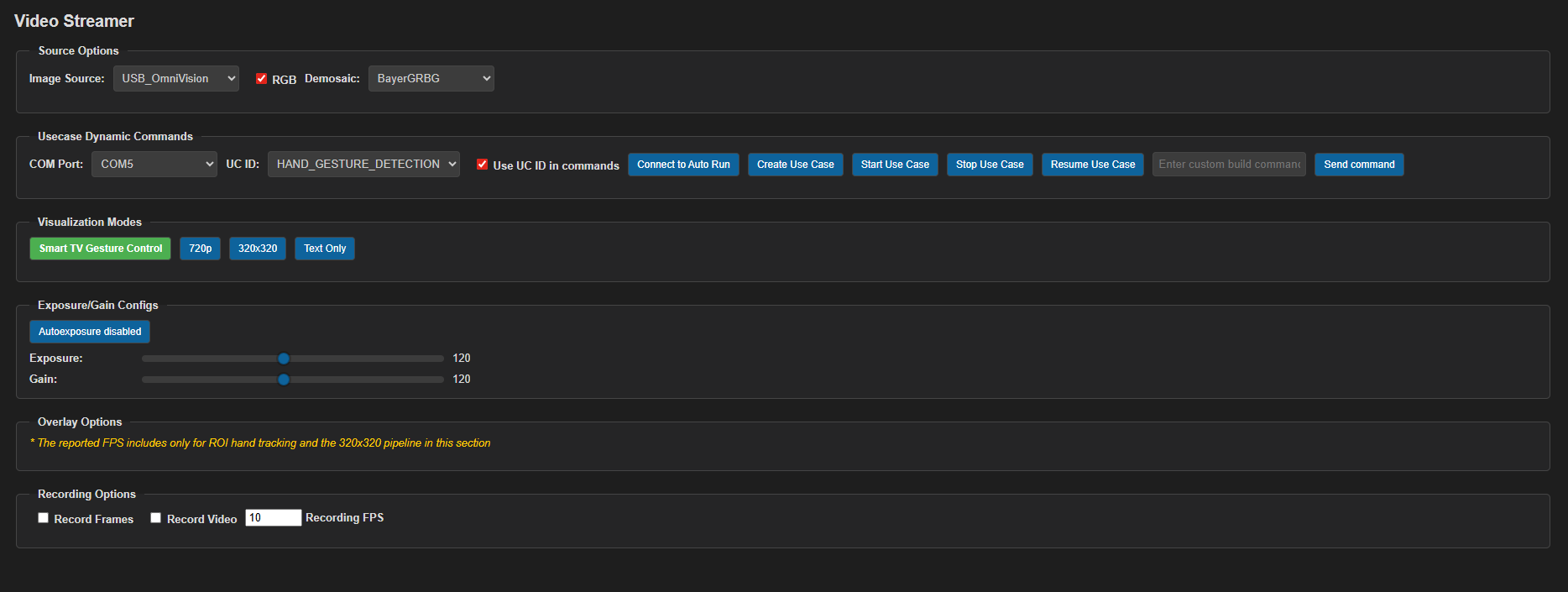

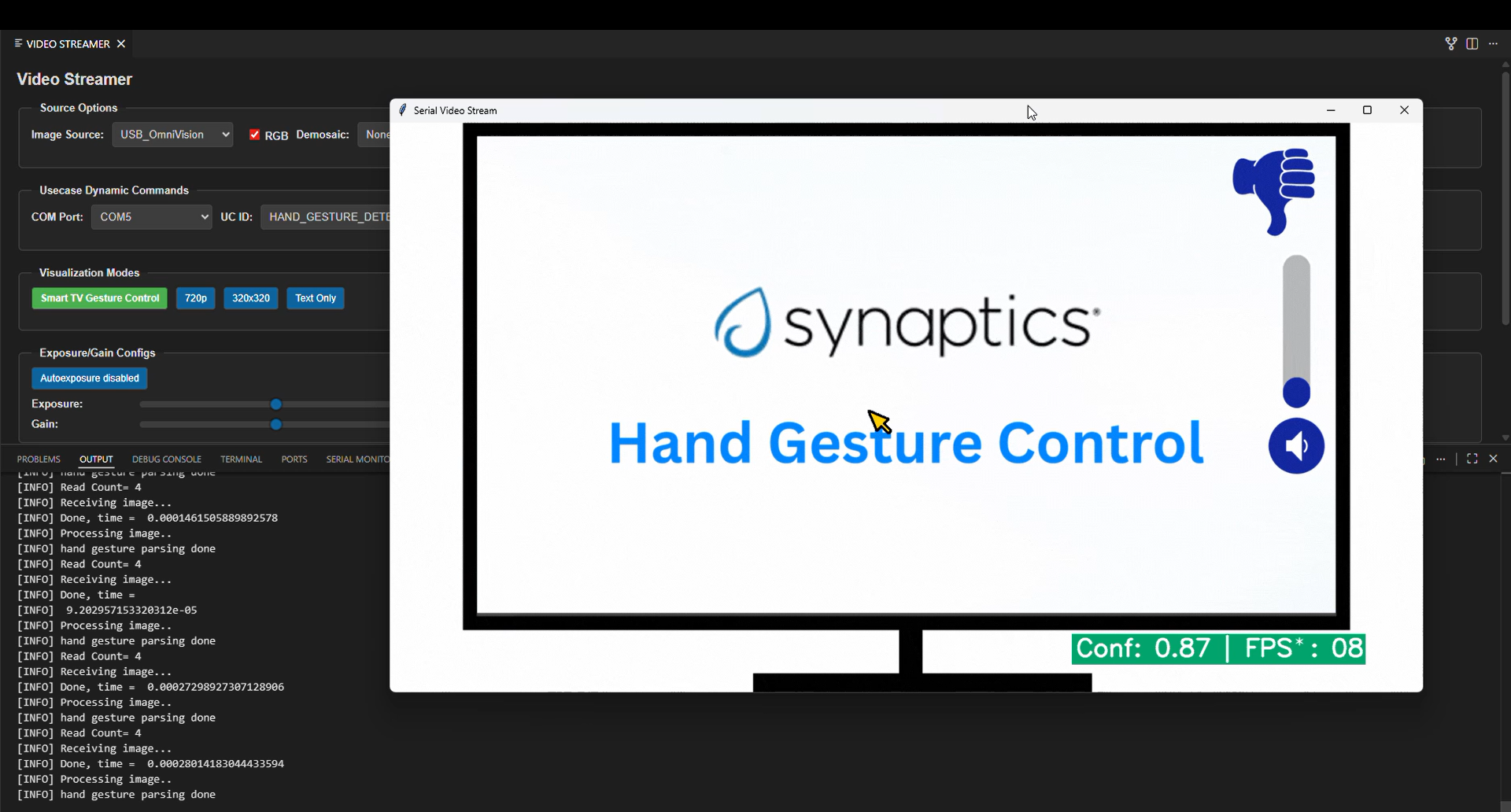

In VS Code, open Video Streamer from the Synaptics sidebar.

For logging output, click SERIAL MONITOR and connect to the DAP logger port on J14.

To make it easier to identify, ensure only J14 is plugged in (not J13).

The logger port is not guaranteed to be consistent across OSes. As a starting point:

Windows: try the lower-numbered J14 COM port first.

Linux/macOS: try the higher-numbered J14 port first.

If you do not see logs after a reset, switch to the other J14 port.

In the Video Streamer dropdown, select the J13 COM port.

Plug in J13 and press RESET on the board.

Windows: select the newly enumerated COM port.

Linux/macOS: select the lower-numbered COM port of the two newly enumerated ports.

Use the Video Streamer controls:

a. Select HAND_GESTURE_DETECTION from the UC ID dropdown.

b. Set RGB Demosaic to BayerGBRG.

c. Click Create Use Case.

d. Click Start Use Case (a Python window opens and the video stream appears).

For logs, use the LOGGER tab when needed.
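The port-selection hints above can be expressed as a small helper. This sketch works on plain lists of port names so it has no dependencies. The function names are hypothetical, and the ordering is only the starting-point heuristic described in the steps, not a guaranteed mapping.

```python
import re
from typing import List, Sequence


def _port_number(name: str) -> int:
    """Trailing integer of a port name, e.g. 'COM12' -> 12; -1 if there is none."""
    match = re.search(r"(\d+)$", name)
    return int(match.group(1)) if match else -1


def logger_port_candidates(j14_ports: Sequence[str], os_name: str) -> List[str]:
    """Order the J14 ports by which one to try first for log output."""
    ordered = sorted(j14_ports, key=_port_number)
    if os_name.lower() != "windows":
        # Linux/macOS: try the higher-numbered port first.
        ordered.reverse()
    return ordered


def streamer_port(before: Sequence[str], after: Sequence[str]) -> str:
    """Pick the lowest-numbered port that appeared after plugging in J13."""
    new_ports = sorted(set(after) - set(before), key=_port_number)
    if not new_ports:
        raise ValueError("no newly enumerated port found")
    return new_ports[0]


if __name__ == "__main__":
    print(logger_port_candidates(["COM10", "COM9"], "Windows"))
    print(streamer_port(["/dev/ttyACM0", "/dev/ttyACM1"],
                        ["/dev/ttyACM0", "/dev/ttyACM1", "/dev/ttyACM2", "/dev/ttyACM3"]))
```

Sorting by the trailing number rather than by string keeps COM9 ahead of COM10. If the first candidate shows no logs after a reset, fall back to the next one in the list.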

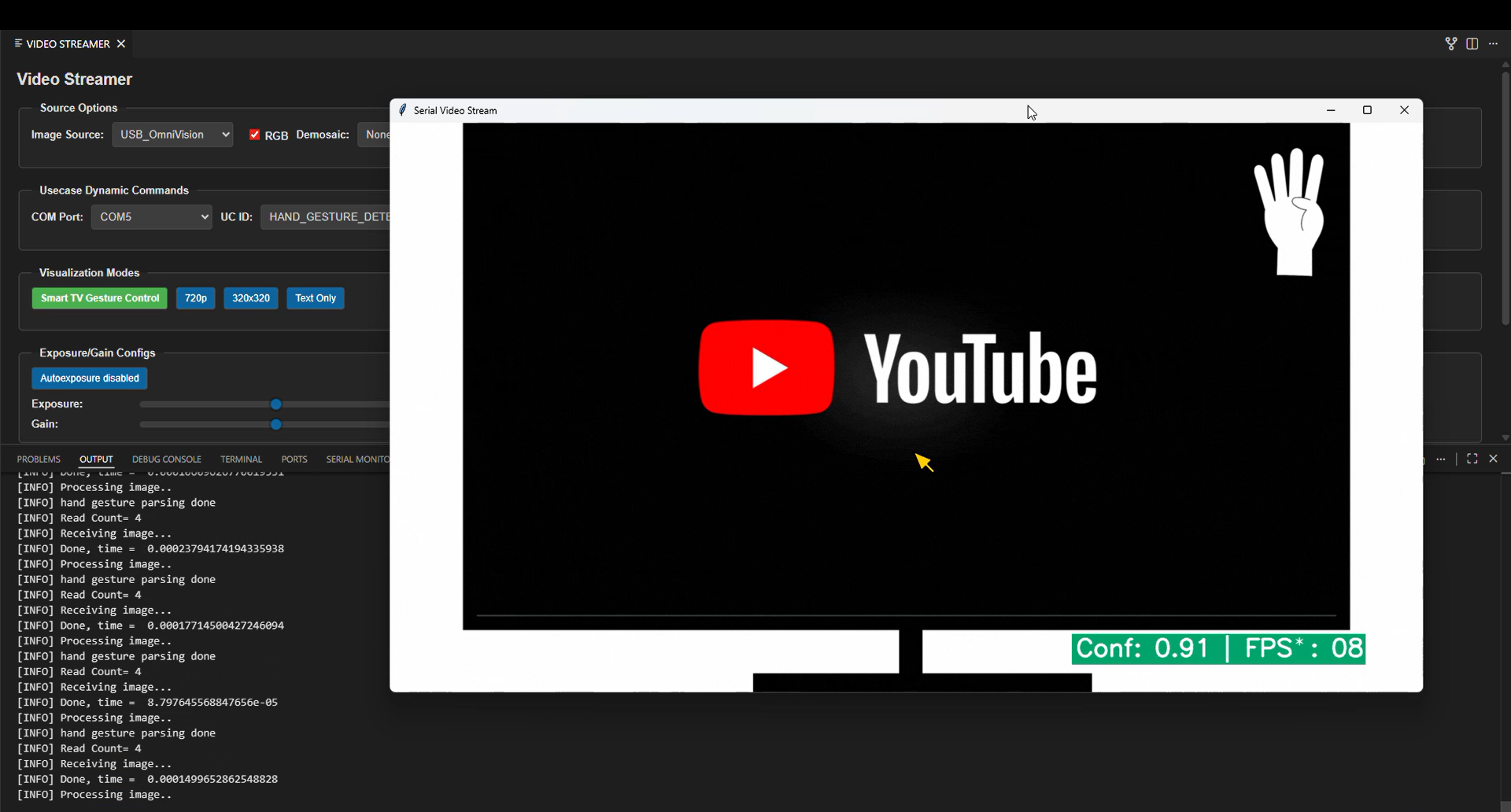

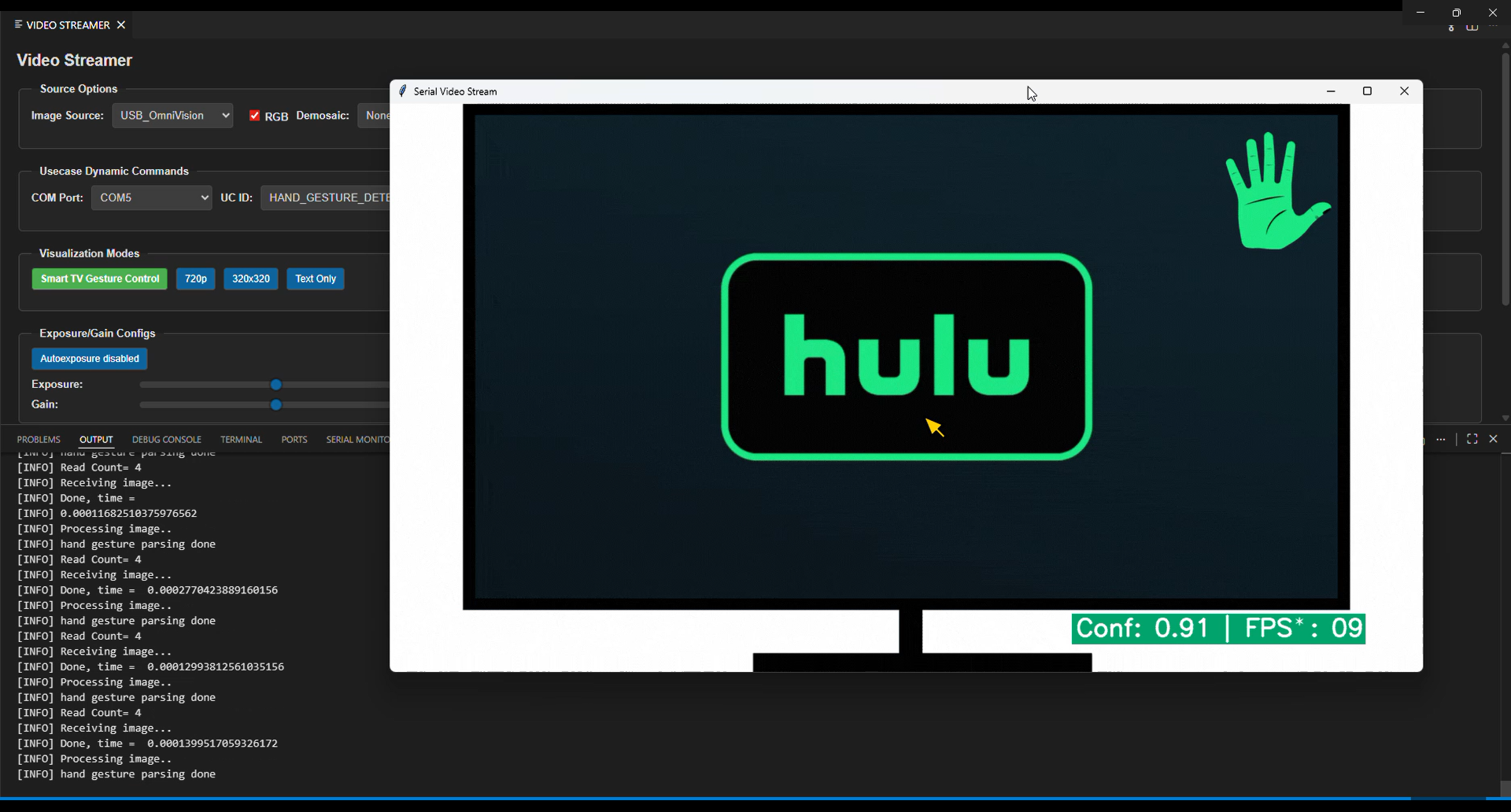

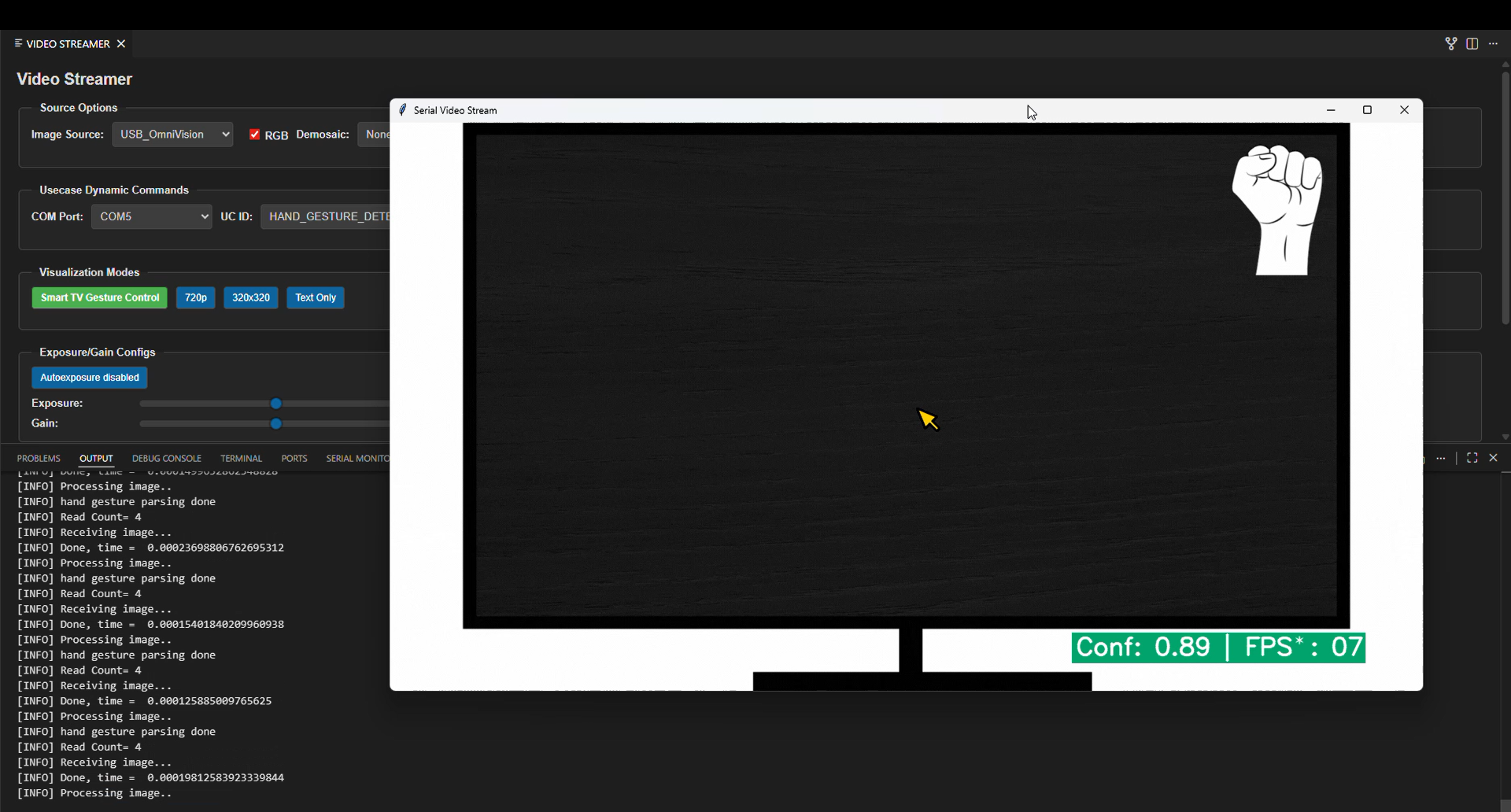

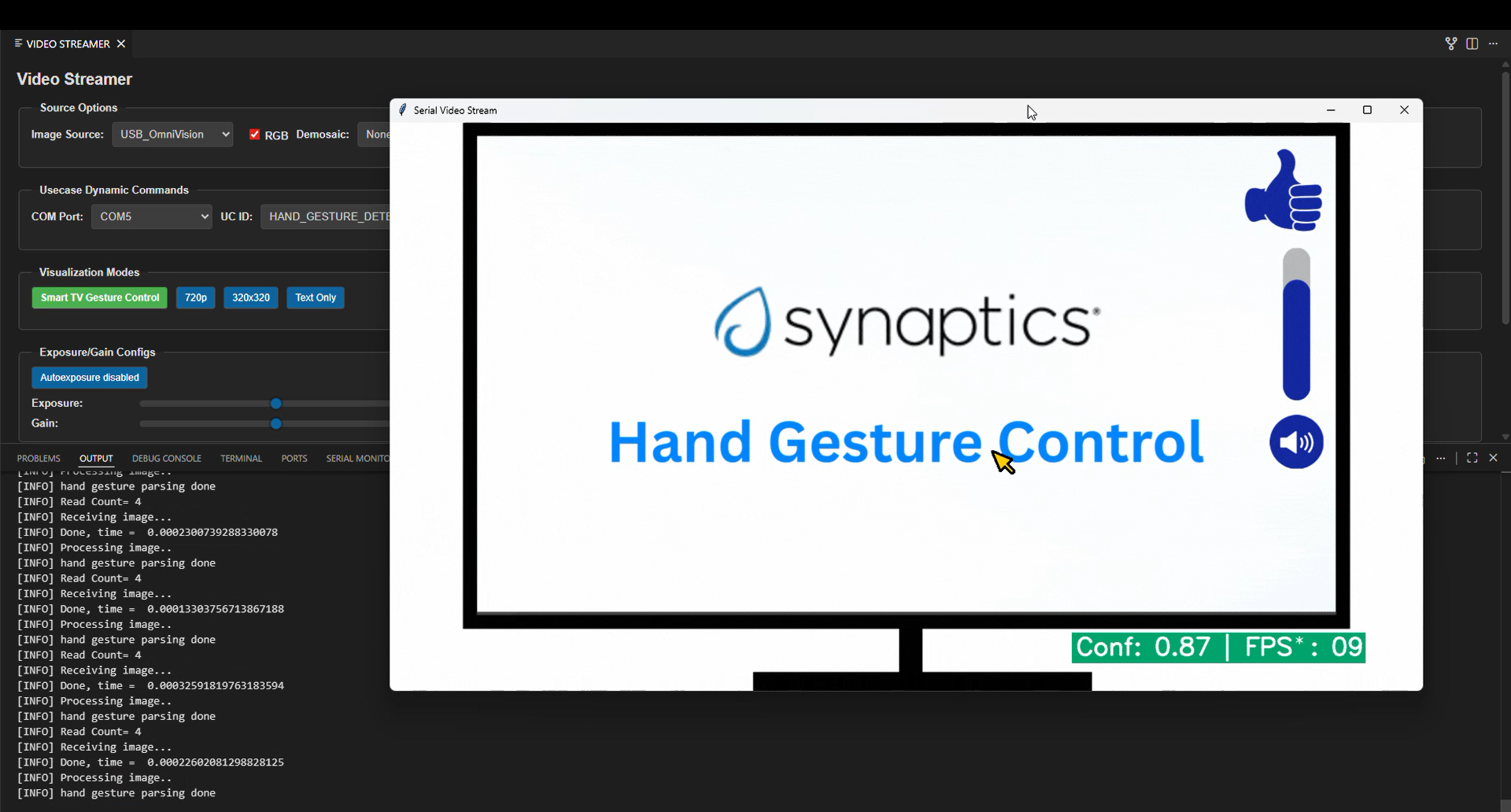

Change Visualization Modes as needed:

Smart TV Gesture Control

720p

320x320

Text only

Autorun use cases: If autorun is enabled, click Connect Image Source to open the video stream pop-up.

Supported Hand Gestures

The following hand gestures are supported:

| Gesture | Description |
|---|---|
| One | One finger raised (index finger). |
| Two | Two fingers raised. |
| Three | Three fingers raised. |
| Four | Four fingers raised. |
| Five | Open palm with five fingers raised. |
| Fist | Closed fist with fingers folded inward. |
| Thumbs Up | Thumb raised upward with other fingers folded. |
| Thumbs Down | Thumb pointed downward with other fingers folded. |
| Pinch | Thumb and index finger brought close together (pinching pose). |
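For host-side code that consumes the classification result, the gesture list can be mirrored in an enumeration. This is a sketch under assumptions: the label strings simply copy the names in the table, and the labels or class IDs the application actually emits are not specified in this document. `Gesture`, `parse_gesture`, and `fingers_raised` are hypothetical names.

```python
from enum import Enum
from typing import Optional


class Gesture(Enum):
    """Supported gestures; values mirror the table names (assumed labels)."""
    ONE = "One"
    TWO = "Two"
    THREE = "Three"
    FOUR = "Four"
    FIVE = "Five"
    FIST = "Fist"
    THUMBS_UP = "Thumbs Up"
    THUMBS_DOWN = "Thumbs Down"
    PINCH = "Pinch"


# Number of raised fingers for the counting gestures in the table.
_FINGER_COUNTS = {
    Gesture.ONE: 1,
    Gesture.TWO: 2,
    Gesture.THREE: 3,
    Gesture.FOUR: 4,
    Gesture.FIVE: 5,
}


def parse_gesture(label: str) -> Gesture:
    """Map a label such as 'thumbs up' to a Gesture, ignoring case and extra spaces."""
    normalized = " ".join(label.split()).lower()
    for gesture in Gesture:
        if gesture.value.lower() == normalized:
            return gesture
    raise ValueError(f"unsupported gesture label: {label!r}")


def fingers_raised(gesture: Gesture) -> Optional[int]:
    """Return the finger count for One..Five, or None for the other gestures."""
    return _FINGER_COUNTS.get(gesture)


if __name__ == "__main__":
    print(parse_gesture("thumbs up"), fingers_raised(Gesture.THREE))
```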

Gesture One

Gesture Two

Gesture Three

Gesture Four

Gesture Five

Gesture Fist

Gesture Thumbs Up

Gesture Thumbs Down

Gesture Pinch